How to add functionality to Kubernetes with Operators

I started writing this blog post the day after I came home from KubeCon and CloudNativeCon 2022. The main thing I noticed was that the content of the talks has changed over the last few years.

Kubernetes’ new challenges

Looking at the topics of this year’s KubeCon / CloudNativeCon, it feels like most companies have already answered the basic questions about Kubernetes, cloud types, logging tools and more. This makes sense, because more and more organizations have successfully adopted Kubernetes. Kubernetes is no longer considered the next big thing, but rather the logical choice. However, we’ve noticed (during the talks, but also in our own journey) that new problems and challenges have arisen, leading to other questions:

- How can I implement more automation?

- How can I control/lower the costs for these setups?

- Is there a way to extend what already exists and add my own functionality to Kubernetes?

One possible way to add functionality to Kubernetes is to use Operators. In this blog post, I will briefly explain how Operators work.

How Operators work

The concept of an operator is quite simple. I believe the easiest way to explain it is by actually installing an operator.

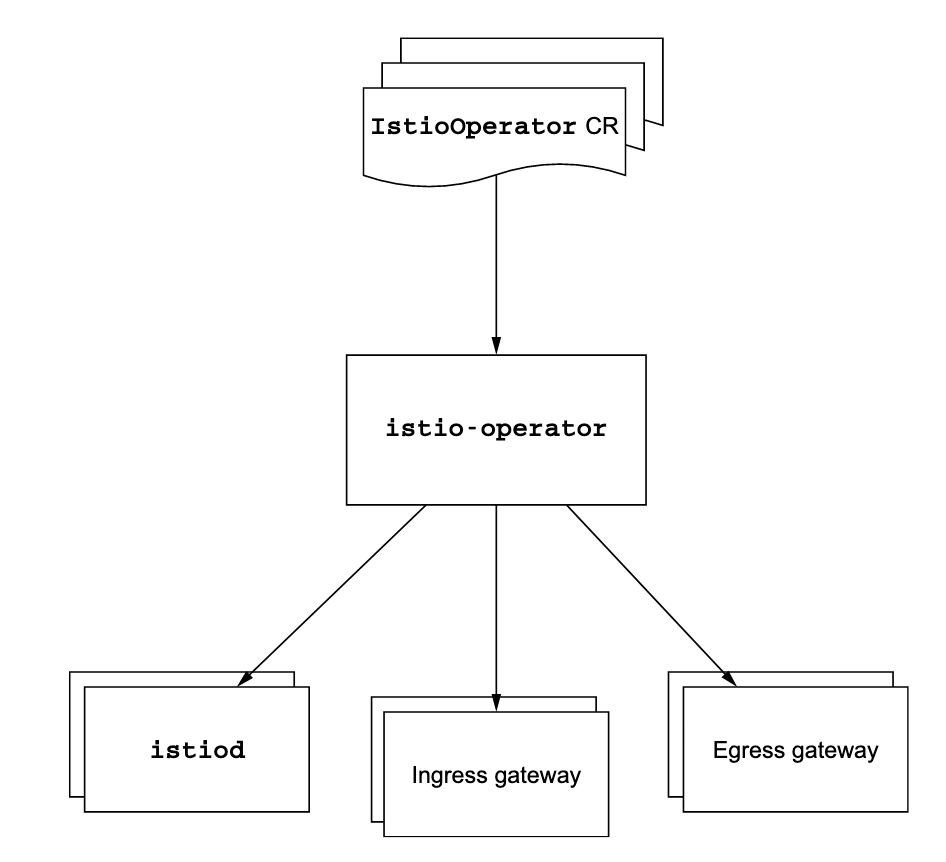

Within ACA, we use the Istio operator. The exact installation steps depend on the operator you are installing, but they are usually quite similar.

First, install the istioctl binary on a machine that has access to the Kubernetes API. Then run the command that installs the operator:

```shell
curl -sL https://istio.io/downloadIstioctl | sh -
export PATH=$PATH:$HOME/.istioctl/bin
istioctl operator init
```

This creates the operator resources in the istio-operator namespace. You should see a pod running:

```shell
kubectl get pods -n istio-operator
```

```text
NAMESPACE        NAME                              READY   STATUS    RESTARTS   AGE
istio-operator   istio-operator-564d46ffb7-nrw2t   1/1     Running   0          20s
```
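If you script the installation, you can wait for the operator to become ready instead of checking the pod list by hand. This is a minimal sketch that assumes the operator runs as a Deployment named istio-operator in the istio-operator namespace (inferred from the pod name above, not verified for every Istio version). `wait_for_operator` is a hypothetical helper name.

```shell
# Hypothetical helper: block until the operator Deployment has finished rolling out.
# Assumption: the Deployment is called "istio-operator" and lives in the
# "istio-operator" namespace, as suggested by the pod name above.
wait_for_operator() {
  local namespace="${1:-istio-operator}"
  local deployment="${2:-istio-operator}"
  local timeout="${3:-120s}"
  # kubectl rollout status exits non-zero if the rollout does not complete in time.
  kubectl -n "$namespace" rollout status "deployment/$deployment" --timeout="$timeout"
}
```

For example, `wait_for_operator && kubectl get crd` only lists the CRDs once the operator is up.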

```shell
kubectl get crd
```

```text
NAME                              CREATED AT
istiooperators.install.istio.io   2022-05-21T19:19:43Z
```

As you can see, a new CustomResourceDefinition (CRD) called istiooperators.install.istio.io has been created. A CRD is a blueprint: it registers a new resource type with the Kubernetes API, so that objects of that type can be added to the cluster. To create configuration, we need to know which ‘kind’ of object the CRD defines.

```shell
kubectl get crd istiooperators.install.istio.io -oyaml
```

```yaml
…
status:
  acceptedNames:
    kind: IstioOperator
…
```

Let’s create a simple IstioOperator resource:

```shell
kubectl apply -f - <<EOF
apiVersion: install.istio.io/v1alpha1
kind: IstioOperator
metadata:
  namespace: istio-system
  name: istio-controlplane
spec:
  profile: minimal
EOF
```

Once the custom resource that contains the configuration is added to the cluster, the operator makes sure the resources in the cluster match whatever is defined in the configuration. You’ll see that new resources are created:

```shell
kubectl get pods -A
```

```text
istio-system   istiod-7dc88f87f4-rsc42   0/1     Pending   0          2m27s
```

Since I run a small kind cluster, the istiod pod can’t be scheduled and is stuck in the Pending state. Let me explain the process first before changing this.

The istio-operator keeps watching the IstioOperator resource for changes. When the resource changes, the operator only makes the changes required to bring the resources in the cluster in line with the state specified in the IstioOperator resource. This behavior is called reconciliation.
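The idea of reconciliation can be illustrated with a toy model. The sketch below is not how the istio-operator is implemented: it compares a made-up desired state with a made-up observed state and prints only the actions needed to close the gap. The resource names and “spec” strings are hypothetical, and it needs bash 4 or later for associative arrays.

```shell
# Toy model of reconciliation, for illustration only (NOT istio-operator code).
# Each array maps a hypothetical resource name to a made-up "spec" string.
declare -A desired=(
  [deployment/istiod]="memory=128Mi"
  [service/istiod]="port=15012"
)
declare -A actual=(
  [deployment/istiod]="memory=1Gi"
  [configmap/old-config]="stale"
)

# Print only the actions needed to make "actual" match "desired".
reconcile() {
  local name
  {
    for name in "${!desired[@]}"; do
      if [[ -z "${actual[$name]+set}" ]]; then
        echo "create $name (${desired[$name]})"
      elif [[ "${actual[$name]}" != "${desired[$name]}" ]]; then
        echo "update $name (${actual[$name]} -> ${desired[$name]})"
      fi
    done
    # Anything that exists but is no longer desired gets removed.
    for name in "${!actual[@]}"; do
      if [[ -z "${desired[$name]+set}" ]]; then
        echo "delete $name"
      fi
    done
  } | sort  # associative arrays have no fixed order, so sort for stable output
}
```

With these values, `reconcile` prints one create (service/istiod), one update (deployment/istiod) and one delete (configmap/old-config). Once the observed state matches the desired state, it prints nothing, which is why a real operator can run its reconciliation loop over and over.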

Let’s check the status of the IstioOperator resource. Note that it’s created in the istio-system namespace.

```shell
kubectl get istiooperator -n istio-system
```

```text
NAME                 REVISION   STATUS        AGE
istio-controlplane              RECONCILING   3m
```

As you can see, it is still reconciling, because the pod can’t start. After some time, it goes into an ERROR state.

```shell
kubectl get istiooperator -n istio-system
```

```text
NAME                 REVISION   STATUS        AGE
istio-controlplane              ERROR         6m58s
```

You can also check the istio-operator log for useful information:

```shell
kubectl -n istio-operator logs istio-operator-564d46ffb7-nrw2t --tail 20
```

```text
- Processing resources for Istiod.
- Processing resources for Istiod. Waiting for Deployment/istio-system/istiod
✘ Istiod encountered an error: failed to wait for resource: resources not ready after 5m0s: timed out waiting for the condition.
```
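Instead of re-running `kubectl get` until the status changes, a script can poll the status and stop on HEALTHY or ERROR. This is a minimal sketch that assumes the STATUS column shown above comes from the `.status.status` field of the IstioOperator resource. It reads that field with a jsonpath query rather than splitting the table on whitespace, because the empty REVISION column would shift the columns. `wait_for_istiooperator` is a hypothetical helper name.

```shell
# Hypothetical helper: poll an IstioOperator resource until it reports HEALTHY
# (exit 0) or ERROR (exit 1), or give up after a number of attempts (exit 2).
# Assumption: the STATUS column is backed by the .status.status field.
wait_for_istiooperator() {
  local name="$1" namespace="${2:-istio-system}"
  local attempts="${3:-60}" interval="${4:-10}"
  local status i
  for ((i = 1; i <= attempts; i++)); do
    status="$(kubectl get istiooperator "$name" -n "$namespace" \
      -o jsonpath='{.status.status}')"
    case "$status" in
      HEALTHY) echo "$name is HEALTHY"; return 0 ;;
      ERROR) echo "$name is in ERROR, check the operator logs" >&2; return 1 ;;
    esac
    sleep "$interval"
  done
  echo "timed out waiting for $name (last status: ${status:-unknown})" >&2
  return 2
}
```

For example: `wait_for_istiooperator istio-controlplane istio-system 60 10`. Recent kubectl versions can also wait on a jsonpath condition with `kubectl wait --for=jsonpath=...`; the loop above additionally stops early when the resource reports ERROR.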

Since I’m running a small demo cluster, I’ll lower the istiod memory request so the pod can be scheduled. This is done in the spec: section of the IstioOperator definition.

```shell
kubectl -n istio-system edit istiooperator istio-controlplane
```

```yaml
spec:
  profile: minimal
  components:
    pilot:
      k8s:
        resources:
          requests:
            memory: 128Mi
```
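If you prefer a non-interactive change (for example in a script or CI pipeline), the same spec change can be applied with `kubectl patch`. This is a minimal sketch: `set_pilot_memory_request` is a hypothetical helper, and the resource name and namespace default to the ones used in this post. It uses a JSON merge patch (`--type merge`) because custom resources do not support strategic merge patches.

```shell
# Hypothetical helper: set the istiod (pilot) memory request non-interactively,
# producing the same spec change as the kubectl edit session above.
set_pilot_memory_request() {
  local memory="$1" name="${2:-istio-controlplane}" namespace="${3:-istio-system}"
  local patch
  patch=$(printf '{"spec":{"components":{"pilot":{"k8s":{"resources":{"requests":{"memory":"%s"}}}}}}}' "$memory")
  # A merge patch only changes the keys it contains; the rest of the spec is kept.
  kubectl patch istiooperator "$name" -n "$namespace" --type merge -p "$patch"
}
```

For example: `set_pilot_memory_request 128Mi`.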

The IstioOperator resource goes back to the RECONCILING state.

```shell
kubectl get istiooperator -n istio-system
```

```text
NAME                 REVISION   STATUS        AGE
istio-controlplane              RECONCILING   11m
```

After some time, it becomes HEALTHY.

```shell
kubectl get istiooperator -n istio-system
```

```text
NAME                 REVISION   STATUS        AGE
istio-controlplane              HEALTHY       12m
```

You can see that the istiod pod is now running:

```text
NAMESPACE      NAME                      READY   STATUS
istio-system   istiod-7dc88f87f4-n86z9   1/1     Running
```

Apart from the istiod deployment, a lot of new CRDs are added as well:

```text
authorizationpolicies.security.istio.io   2022-05-21T20:08:05Z
destinationrules.networking.istio.io      2022-05-21T20:08:05Z
envoyfilters.networking.istio.io          2022-05-21T20:08:05Z
gateways.networking.istio.io              2022-05-21T20:08:05Z
istiooperators.install.istio.io           2022-05-21T20:07:01Z
peerauthentications.security.istio.io     2022-05-21T20:08:05Z
proxyconfigs.networking.istio.io          2022-05-21T20:08:05Z
requestauthentications.security.istio.io  2022-05-21T20:08:05Z
serviceentries.networking.istio.io        2022-05-21T20:08:05Z
sidecars.networking.istio.io              2022-05-21T20:08:05Z
telemetries.telemetry.istio.io            2022-05-21T20:08:05Z
virtualservices.networking.istio.io       2022-05-21T20:08:05Z
wasmplugins.extensions.istio.io           2022-05-21T20:08:06Z
workloadentries.networking.istio.io       2022-05-21T20:08:06Z
workloadgroups.networking.istio.io        2022-05-21T20:08:06Z
```

How the operator works - summary

As you can see, this is a very easy way to set up Istio in a cluster. In short, these are the steps:

- Install the operator

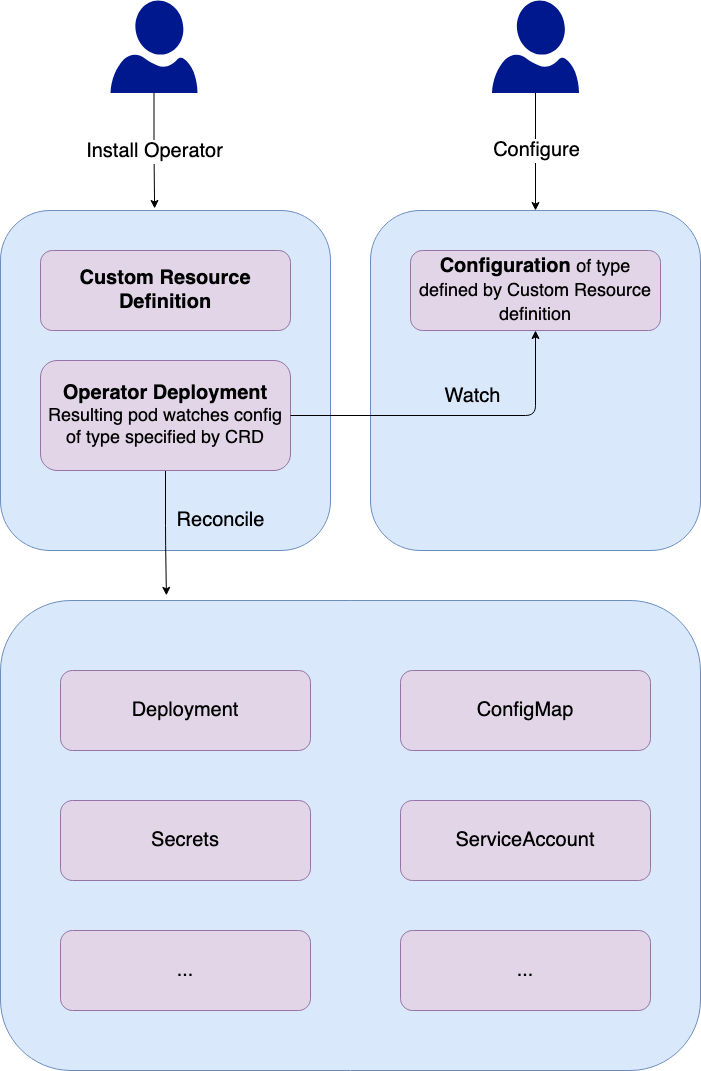

- One or more CustomResourceDefinitions are added, providing a blueprint for the objects that can be created and managed. A Deployment is created, which in turn runs a pod that watches for custom resources of the kinds defined by the CRDs.

- The user adds configuration to the cluster as a custom resource of the kind defined by the CRD.

- The operator pod notices the new configuration and takes all steps required to bring the cluster into the desired state specified by the configuration.

Benefits of the operator approach

The operator approach makes it easy to package a set of resources such as Deployments, Jobs and CustomResourceDefinitions. This way, it’s easy to add behavior and capabilities to Kubernetes.

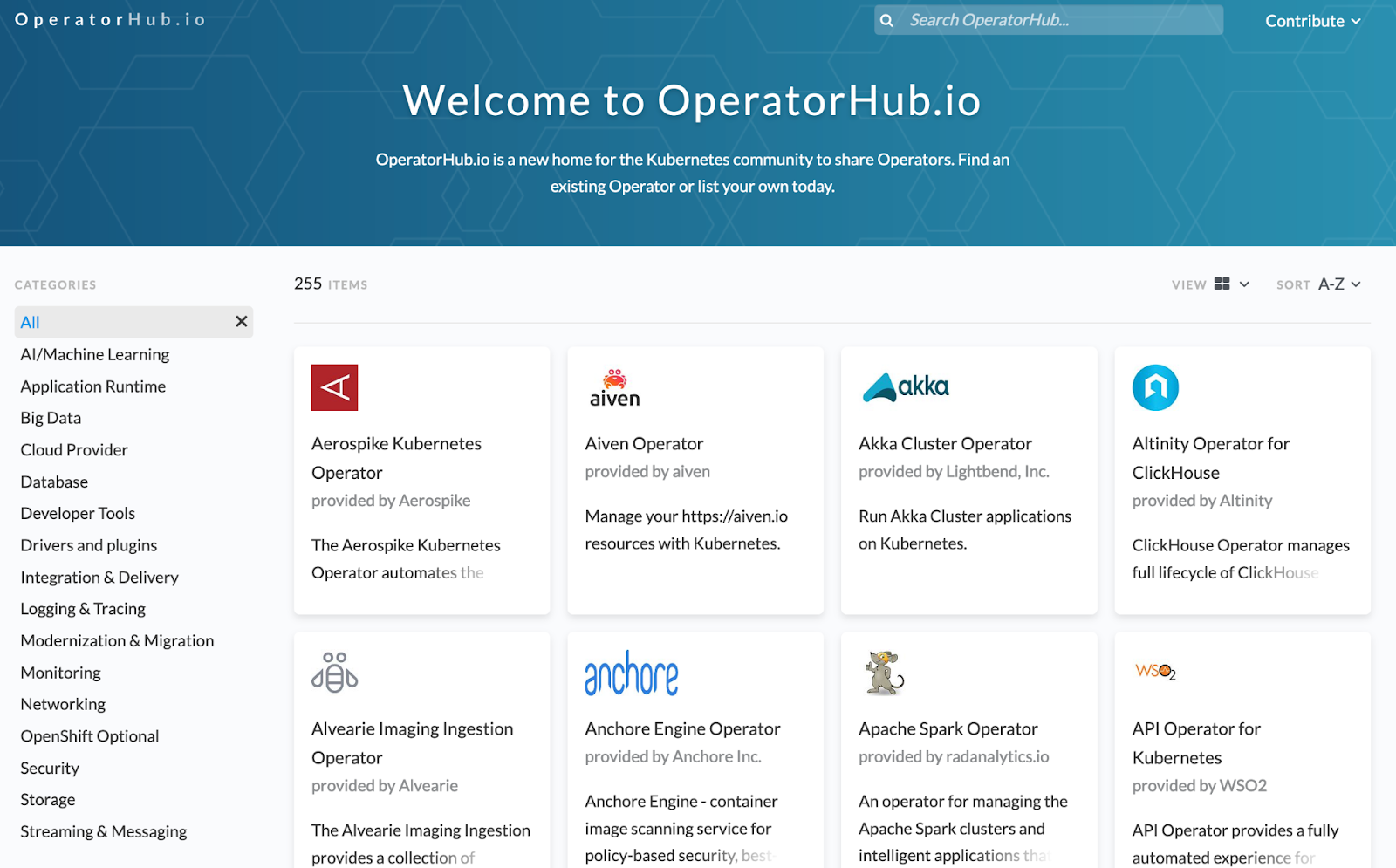

OperatorHub (https://operatorhub.io/) is a catalog of available operators; at the time of writing, it lists 255 operators. Operators are usually installed with just a few commands or lines of code.

It’s also possible to create your own operators. It can make sense to package a set of Deployments, Jobs, CRDs, … that provide a specific functionality as an operator. That operator can then be treated like any other software artifact, using pipelines for CVE validation, E2E tests, rollout to test environments and more before a new version is promoted to production.

Pitfalls

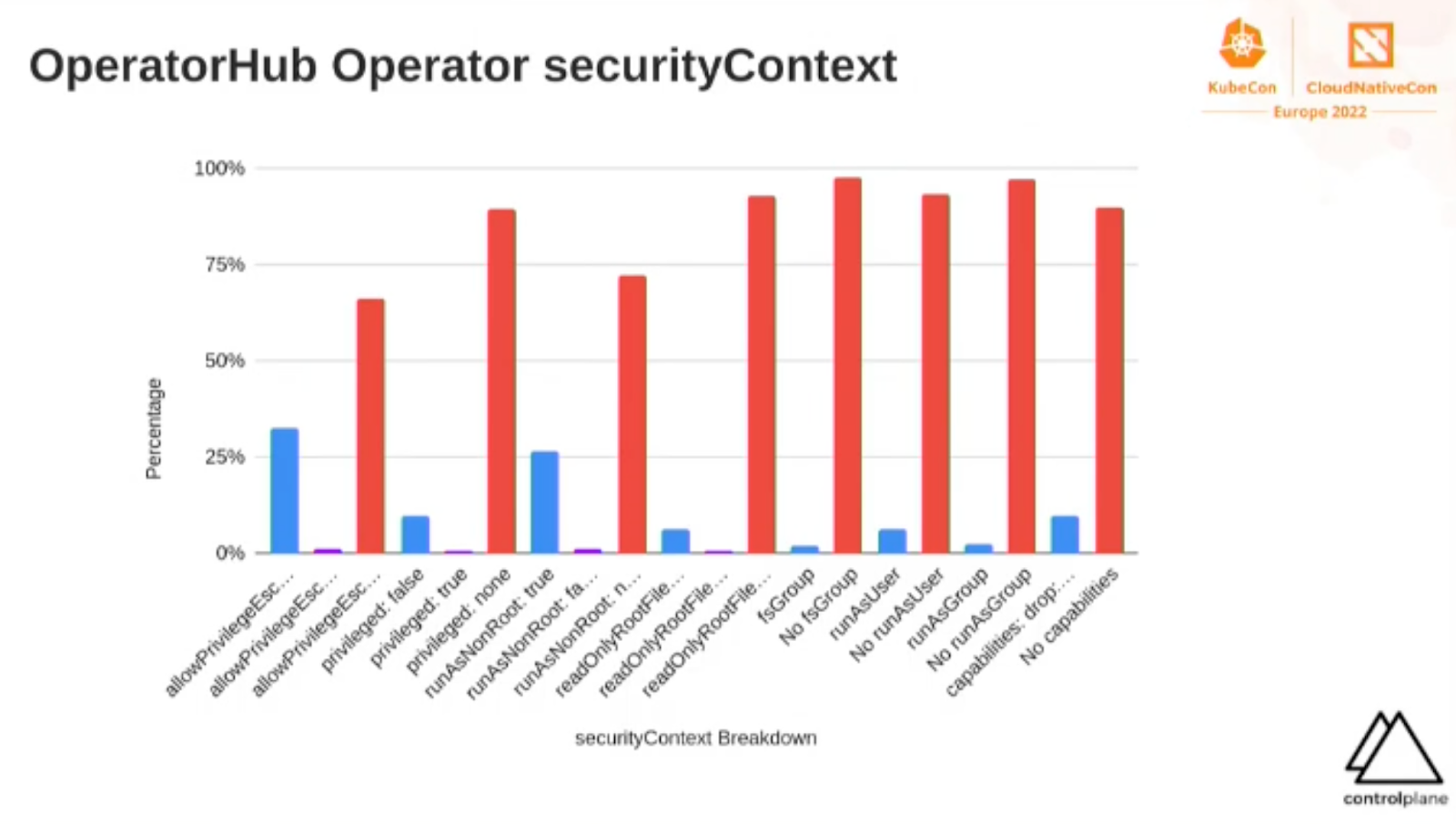

We have been using Kubernetes for a long time within the ACA Group and have collected some security best practices during this period. We’ve noticed that single-file deployments and Helm charts from the internet are often not as well configured as we want them to be. Think of RBAC rules that grant too many permissions, resources that are not correctly namespaced, or containers running as root.

When using operators from operatorhub.io, you basically trust the vendor or provider to follow security best practices. However, one of the talks at KubeCon 2022 that made the biggest impression on me stated that many operators have security issues. I suggest watching Tweezering Kubernetes Resources: Operating on Operators - Kevin Ward, ControlPlane before installing one.
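One quick check before trusting an operator is to ask the API server whether its service account can do everything in the cluster. This is a minimal sketch: `check_sa_wildcard_access` is a hypothetical helper, it relies on `kubectl auth can-i` exiting with status 0 for “yes”, and it requires permission to impersonate service accounts (cluster admins have this). The service account name you pass in (for the Istio operator, possibly istio-operator in the istio-operator namespace) is an assumption you should verify in your own cluster.

```shell
# Hypothetical helper: warn if a service account may perform any verb on any
# resource in all namespaces, which is effectively cluster-admin.
check_sa_wildcard_access() {
  local namespace="$1" serviceaccount="$2"
  local subject="system:serviceaccount:${namespace}:${serviceaccount}"
  # kubectl auth can-i exits 0 when the action is allowed.
  if kubectl auth can-i '*' '*' --all-namespaces --as="$subject" >/dev/null 2>&1; then
    echo "WARNING: $subject can perform any action on any resource"
    return 1
  fi
  echo "OK: $subject does not have wildcard access"
}
```

For a more detailed view, `kubectl auth can-i --list --as=system:serviceaccount:<namespace>:<name>` lists the individual permissions of the account.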

Another thing we’ve noticed is that operators can speed up the implementation of new tools and features, but be sure to read the documentation provided by the operator’s creator before you dive into advanced configuration. Not every feature of the underlying tool is necessarily exposed through the CRD that the operator provides. When a feature is missing, it is tempting to edit the resources created by the operator directly, but that is bad practice. The operator is not tested against your manual changes, which might cause inconsistencies. Additionally, new operator versions might (partly) undo your changes, which can also cause problems.

At that point, you’re basically stuck, unless you create your own operator that provides the additional features. We’ve also noticed that there is no real ‘rule book’ on how to provide CRDs, and documentation is not always easy to find or understand.

Conclusion

Operators are currently a hot topic within the Kubernetes community. The number of available operators is growing fast, making it easy to add functionality to your cluster. However, there is no rule book or minimal quality baseline. When installing operators from OperatorHub, be sure to check their contents or validate the created resources on a local setup. We expect to see changes and improvements in the near future, but operators can already be very useful today.