KubeCon / CloudNativeCon 2022 highlights!

Didn’t make it to KubeCon this year? Read on for the highlights of this year's KubeCon / CloudNativeCon, as seen by ACA Group’s Cloud Native team!

What is KubeCon / CloudNativeCon?

KubeCon (Kubernetes Conference) / CloudNativeCon, organized yearly in the EMEA region by the Cloud Native Computing Foundation (CNCF), is a flagship conference that gathers adopters and technologists from leading open source and cloud native communities in one location. This year, approximately 5,000 physical and 10,000 virtual attendees showed up for the conference.

CNCF is the open source, vendor-neutral hub of cloud native computing, hosting projects like Kubernetes and Prometheus to make cloud native universal and sustainable.

The conference brought 300+ sessions from partners, industry leaders, users and vendors on topics covering CI/CD, GitOps, Kubernetes, machine learning, observability, networking, performance, service mesh and security. There's always something interesting to hear about at KubeCon, no matter your area of interest or level of expertise!

It's clear that the cloud native ecosystem has grown into a mature, trend-setting force in the industry. It all started with Kubernetes and is now carried by a massive number of organizations that support and use it, and that have grown their business by building cloud native products or using them in mission-critical solutions.

2022's major themes

What struck us during this year’s KubeCon were the following major themes:

- The first is the increasing maturity and stabilization of Kubernetes and associated products for monitoring, CI/CD, GitOps, operators, costing and service meshes, along with bug fixes and small improvements.

- The second is a stronger focus on security: making pods more secure, preventing trampoline pod breakouts, end-to-end encryption and full threat analysis of a company's complete k8s infrastructure.

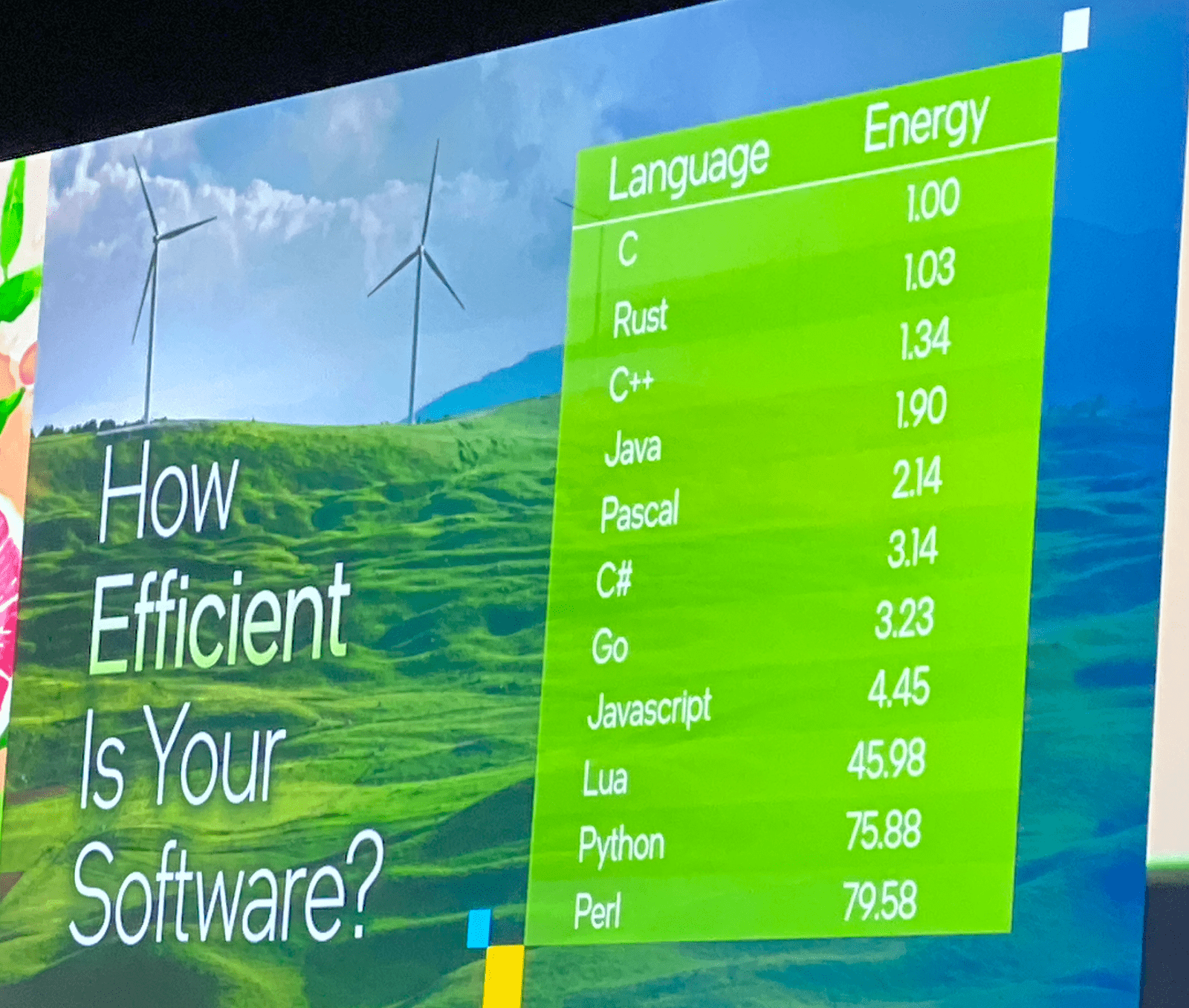

- The third is sustainability, and a growing awareness that systems running k8s and the apps on them consume a lot of energy while 60 to 80% of CPU capacity remains unused.

- Even languages can be energy (in)efficient. Java is among the most power efficient, while Python is apparently far less so due to the nature of the interpreter / compiler. Companies need to plan and work on decreasing the energy footprint of both applications and infrastructure. Autoscaling will play an important role in achieving this.

Sessions highlights

Sustainability

Data centers consume 8% of all electricity generated worldwide. So we'll need to reflect on:

- the effective usage of our infrastructure, avoiding idle time (on average, CPU utilization is only between 20 and 40%)

- running as many workloads as possible on the servers that are up

- shutting down resources when they are not needed by applying autoscaling approaches

- the coding technology used in our software, since some programming languages use less CPU than others.
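To make the utilization numbers above a bit more tangible, here is a minimal back-of-the-envelope sketch. The fleet size and the 70% target are hypothetical values we picked for illustration; they were not given in the talks.

```python
import math


def idle_fraction(cpu_utilization: float) -> float:
    """Share of CPU capacity that sits unused at a given average utilization."""
    return 1.0 - cpu_utilization


def servers_needed(current_servers: int, current_utilization: float,
                   target_utilization: float) -> int:
    """Servers required to carry the same load at a higher target utilization."""
    total_load = current_servers * current_utilization
    return math.ceil(total_load / target_utilization)


# Hypothetical fleet: 100 servers at 30% average CPU, consolidated to 70%.
print(servers_needed(100, 0.30, 0.70))  # → 43
```

In this assumed scenario, packing workloads more densely would let more than half of the servers be switched off, which is exactly the kind of gain bin packing and autoscaling aim for.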

CICD / GitOps

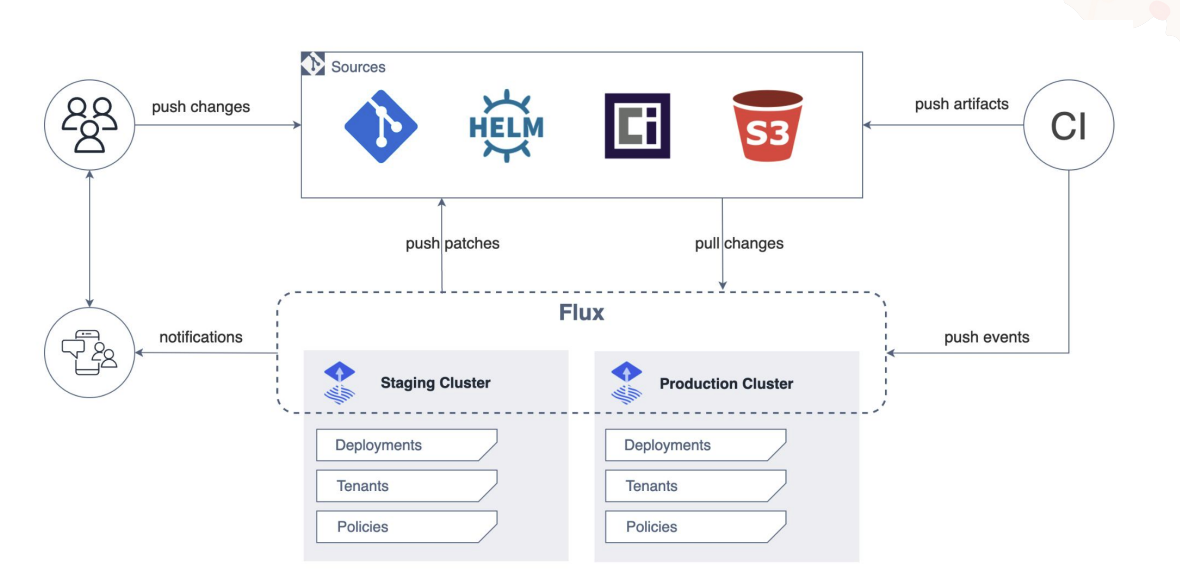

GitOps automates infrastructure updates using a Git workflow with continuous integration (CI) and continuous delivery (CD). When new code is merged, the CI/CD pipeline enacts the change in the environment.

Flux is a great example of this. Flux provides GitOps for both apps and infrastructure. It supports GitRepository, HelmRepository and Bucket CRDs as the single source of truth.

With A/B or canary deployments, new features can be rolled out without impacting all users, and a failed deployment can easily be rolled back.
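The core GitOps idea, comparing the state declared in Git with what actually runs and acting on the difference, can be sketched in a few lines. This is a conceptual illustration only and not how Flux is implemented; the function name, the state dictionaries and the assumption that orphaned resources get deleted (pruning) are ours.

```python
def plan_reconciliation(desired: dict, actual: dict) -> list:
    """Return the actions needed to make `actual` match `desired`."""
    actions = []
    for name, spec in sorted(desired.items()):
        if name not in actual:
            actions.append(("create", name))
        elif actual[name] != spec:
            actions.append(("update", name))
    # Assumption: pruning is enabled, so anything not declared in Git is removed.
    for name in sorted(actual):
        if name not in desired:
            actions.append(("delete", name))
    return actions


# Hypothetical state: Git declares two apps, the cluster runs a stale one plus an orphan.
desired_state = {"web": {"image": "web:1.1"}, "api": {"image": "api:2.0"}}
cluster_state = {"web": {"image": "web:1.0"}, "old-job": {"image": "job:0.9"}}
print(plan_reconciliation(desired_state, cluster_state))
```

A GitOps tool runs a loop like this continuously, so manual changes in the cluster drift back to whatever Git declares.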

Check out the KubeCon schedule page for more information!

Kubernetes

Even though Kubernetes 1.24 was released a few weeks before the start of the event, not many talks were focused on the Kubernetes core. Most talks were focused on extending Kubernetes (using APIs, controllers, operators, …) or best practices around security, CI/CD, monitoring … for whatever will run within the Kubernetes cluster. If you're interested in the new features that Kubernetes 1.24 has to offer, you can check the official website.

Observability

Getting insights into how your application is running in your cluster is crucial, but not always practical. This is where eBPF comes into play: tools such as Pixie use it to collect data without any code changes.

Check out the KubeCon schedule page for more information!

FinOps

Now that more and more people are using Kubernetes, a lot of workloads have been migrated. All these containers have a footprint. Memory, CPU, storage, … need to be allocated, and they all have a cost. Cost management was a recurring topic during the talks. Using autoscaling (adding but also removing capacity) to match the required resources and identifying unused resources are part of this new movement. Tools like Kubecost are becoming increasingly popular.

Performance

One of the most common problems in a cluster is not having enough space or resources. With the help of a Vertical Pod Autoscaler (VPA) this can be a thing of the past. A VPA analyzes and stores memory and CPU metrics to automatically adjust the CPU and memory requests and limits to the right values. This approach lets you save money, avoid waste, optimally size the underlying hardware, tune resources on worker nodes and optimize the placement of pods in a Kubernetes cluster.
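To give a feel for what "adjusting to the right request" means, here is a simplified sketch that derives a request from observed usage: take a high percentile of the samples and add some headroom. This is not the actual VPA recommender; the function name, the 90th percentile and the 15% margin are assumptions chosen for illustration, and the samples are made up.

```python
def recommend_request(usage_samples: list, percentile: int = 90,
                      margin_percent: int = 15) -> int:
    """Suggest a resource request from observed integer usage samples."""
    if not usage_samples:
        raise ValueError("need at least one usage sample")
    ordered = sorted(usage_samples)
    # Nearest-rank percentile, using integer arithmetic to avoid rounding surprises.
    rank = max(1, (percentile * len(ordered) + 99) // 100)
    base = ordered[rank - 1]
    # Add headroom on top of the observed percentile, rounding up.
    return (base * (100 + margin_percent) + 99) // 100


# Hypothetical CPU usage in millicores, including one short spike.
cpu_millicores = [100, 110, 120, 130, 140, 150, 160, 170, 180, 400]
print(recommend_request(cpu_millicores))  # → 207
```

Using a percentile rather than the maximum keeps a single spike (the 400m sample here) from inflating the request for the whole lifetime of the pod.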

Check out the KubeCon schedule page for more information!

Service mesh

We all know it's extremely important to know which applications are sharing data with which other applications in your cluster. A service mesh provides traffic control inside your cluster(s). You can block or permit any request that is sent or received from any application to other applications. It also provides information such as traffic metrics, specs and splits to help you understand the data flow.

In the talk Service Mesh at Scale: How Xbox Cloud Gaming Secures 22k Pods with Linkerd, Chris explains why they chose Linkerd and what the benefits of a service mesh are.

Check out the KubeCon schedule page for more information!

Security

Trampoline pods, sounds fun, right? During a talk by two security researchers from Palo Alto Networks, we learned that they aren’t all that fun. In short, these are pods that can be used to gain cluster admin privileges. To learn more about the concept and how to deal with them, we strongly recommend taking a look at the slides on the KubeCon schedule page!

Lachlan Evenson from Microsoft gave a clear explanation of Pod Security in his talk The Hitchhiker's Guide to Pod Security.

Pod Security is a built-in admission controller that evaluates Pod specifications against a predefined set of Pod Security Standards and determines whether to admit or deny the pod from running.

— Lachlan Evenson, Principal Program Manager at Microsoft

Pod Security is replacing PodSecurityPolicy starting from Kubernetes 1.23. So if you are using PodSecurityPolicy, now might be a good time to further research Pod Security and the migration path. In version 1.25, support for PodSecurityPolicy will be removed.

If you aren’t using PodSecurityPolicy or Pod Security, it is definitely time to further investigate it!
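To illustrate what "evaluating a Pod specification against a standard" looks like, here is a toy sketch that checks a pod spec for a handful of settings that the Baseline Pod Security Standard forbids. It covers only a small subset of the standard, ignores init containers, and is in no way the real admission controller; the function name and the example spec are hypothetical.

```python
def baseline_violations(pod_spec: dict) -> list:
    """Return human-readable violations for a small subset of Baseline checks."""
    violations = []
    # Sharing host namespaces is not allowed under the Baseline standard.
    for field in ("hostNetwork", "hostPID", "hostIPC"):
        if pod_spec.get(field):
            violations.append(f"{field} must not be true")
    # Privileged containers are not allowed either.
    for container in pod_spec.get("containers", []):
        security_context = container.get("securityContext") or {}
        if security_context.get("privileged"):
            violations.append(f"container {container.get('name')} must not be privileged")
    return violations


# Hypothetical pod spec with two problems.
risky_pod = {
    "hostNetwork": True,
    "containers": [
        {"name": "app", "securityContext": {"privileged": True}},
        {"name": "sidecar"},
    ],
}
for violation in baseline_violations(risky_pod):
    print(violation)
```

An admission controller performs this kind of check before the pod is stored, and then admits or denies it depending on the outcome.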

Another recurring theme of KubeCon 2022 was operators. Operators enable the extension of the Kubernetes API with operational knowledge. This is achieved by combining Kubernetes controllers and watched objects that describe the desired state. They introduce Custom Resource Definitions, custom controllers, Kubernetes or cloud resources and logging and metrics, making life easier for Dev as well as Ops.

However, during a talk by Kevin Ward from ControlPlane, we learned that there are some risks. Additionally, and more importantly, he also talked about how we can identify those risks with tools such as BadRobot and an operator threat matrix. Check out the KubeCon schedule page for more information!

Scheduling

Telemetry Aware Scheduling helps you schedule your workloads based on metrics from your worker nodes. You can, for example, set a rule to not schedule new workloads on worker nodes with more than 90% used memory. The cluster will take this into account when scheduling a pod. Another nice feature of this tool is that it can also reschedule pods to make sure your rules stay respected.

Check out the KubeCon schedule page for more information!
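The 90% memory rule from the example above boils down to a simple filter over node metrics. The sketch below only illustrates that idea; it does not use the Telemetry Aware Scheduling API, and the function names and node metrics are made up.

```python
def schedulable_nodes(memory_used_percent: dict, max_used_percent: float = 90.0) -> list:
    """Nodes that may still receive new workloads under the rule."""
    return sorted(node for node, used in memory_used_percent.items()
                  if used <= max_used_percent)


def nodes_violating_rule(memory_used_percent: dict, max_used_percent: float = 90.0) -> list:
    """Nodes whose pods are candidates for rescheduling."""
    return sorted(node for node, used in memory_used_percent.items()
                  if used > max_used_percent)


# Hypothetical node metrics (memory used, in percent).
metrics = {"worker-1": 45.0, "worker-2": 93.5, "worker-3": 90.0}
print(schedulable_nodes(metrics))
print(nodes_violating_rule(metrics))
```

The first list is what the scheduler would consider for new pods; the second is where rescheduling would kick in to bring the cluster back in line with the rule.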

Cluster autoscaling

A great way for stateless workloads to scale cost-effectively is to use AWS EC2 Spot, which is spare VM capacity available at a discount. To use Spot instances effectively in a Kubernetes cluster, you should use aws-node-termination-handler. This way, you can move your workloads off a worker node when AWS decides to reclaim it. Another good tool is Karpenter, which provisions Spot instances just in time for your cluster. With these two tools, you can host your stateless workloads cost-effectively!

Check out the KubeCon schedule page for more information!

Event-driven autoscaling

Using the Horizontal Pod Autoscaler (HPA) is a great way to scale pods based on metrics such as CPU utilization, memory usage, and more.

Instead of scaling based on metrics, Kubernetes Event Driven Autoscaling (KEDA) can scale based on events (Apache Kafka, RabbitMQ, AWS SQS, …) and, unlike HPA, it can even scale to 0.
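The difference between the two approaches can be sketched as follows. The first function follows the replica formula from the Kubernetes HPA documentation; the second is our own simplified illustration of event-driven scaling on queue length, not KEDA's actual implementation, and its name and parameters are hypothetical.

```python
import math


def hpa_desired_replicas(current_replicas: int, current_metric: float,
                         target_metric: float) -> int:
    """desiredReplicas = ceil(currentReplicas * currentMetric / targetMetric)."""
    return math.ceil(current_replicas * current_metric / target_metric)


def event_driven_replicas(queue_length: int, messages_per_replica: int,
                          max_replicas: int) -> int:
    """Scale on pending events; an empty queue means zero replicas."""
    if queue_length <= 0:
        return 0
    return min(max_replicas, math.ceil(queue_length / messages_per_replica))


# 4 pods at 90% CPU with a 60% target need to grow.
print(hpa_desired_replicas(4, 90, 60))    # → 6
# 45 pending messages, 10 per replica, capped at 20 replicas.
print(event_driven_replicas(45, 10, 20))  # → 5
# Nothing to process: scale to zero.
print(event_driven_replicas(0, 10, 20))   # → 0
```

Scaling on the number of pending events reacts to the backlog itself instead of waiting for CPU or memory to rise, and it lets idle consumers disappear entirely.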

Check out the KubeCon schedule page for more information!

Wrap-up

We had a blast this year at the conference. We left feeling inspired, and we'll no doubt translate that into internal projects, apply it to new customer projects and discuss it with existing customers where applicable. Not only that, but we'll brief our colleagues and organize an afterglow session for those interested back home in Belgium.

If you appreciated our blog article, feel free to drop us a small message. We are always happy when the content that we publish is of value or interest to you. If you think we can help you or your company in adopting Cloud Native, drop me a note at peter.jans@aca-it.be.

As a final note we'd like to thank Mona for the logistics, Stijn and Ronny for this opportunity and the rest of the team who stayed behind to keep an eye on the systems of our valued customers.